In our second post on common usability testing mistakes, I’ll discuss running remote unmoderated studies. Of course, the ease of running an unmoderated study is its greatest advantage. However, since unmoderated testing has its own unique challenges (and opportunities), there are a few key points to consider before you get started. The following are the top three mistakes to avoid in a remote unmoderated study.

Your test is too long or complex

On one hand, not requiring a moderator is what gives you the flexibility to conduct more tests with a wider range of participants. On the other hand, it means that no one is there to actually moderate the sessions – that is, to explain scenarios or tasks, answer questions, make sure users don’t get too far off track, or ask follow-up questions. In short, no one is there to ensure everything goes smoothly.

For this reason, it’s especially important that you do a good job planning. Unmoderated studies are most effective when you keep the scope focused and define very specific research questions.

Write your tasks in the simplest, shortest way possible. Make sure the conclusion of the task is clear-cut, since users will have to be their own judge of whether or not they have completed it.

Do not include more than five tasks. Keeping the study relatively short will not only reduce the possibility of user error but also contribute to higher completion rates.

For more guidance on planning tests, see our previous post, “Top Three Mistakes to Avoid When Planning a Usability Test.”

Nevertheless, even the best planning can’t account for everything. This leads to the second most common mistake.

You didn’t run a pilot

The best way to ensure your sessions run smoothly on their own is to test your test!

First of all, go through it yourself. Closely review how you describe your scenarios and tasks, looking for any leading language or unintentional disclosures as well as spelling errors and typos.

Ask a colleague who is unconnected to the test to try it out, too. A fresh pair of eyes will be better equipped to judge if everything is clear.

Once you’re satisfied with your internal review, run a pilot with a sample of 5-10 users. A pilot will uncover any major problems with your test design before you conduct it on a larger scale, saving time and money.

In addition, reviewing the pilot sessions will reveal whether or not your tasks are providing useful results. This brings us to the third point.

You aren’t iterating

When you start watching your pilot sessions, you will likely see improvements that can be made – either to your study design or to your prototype or product itself. The ease of running remote unmoderated testing allows you to make these changes right away, before continuing with more sessions. By making use of findings immediately, you will get fresh insight out of your subsequent tests instead of hearing the same feedback repeatedly.

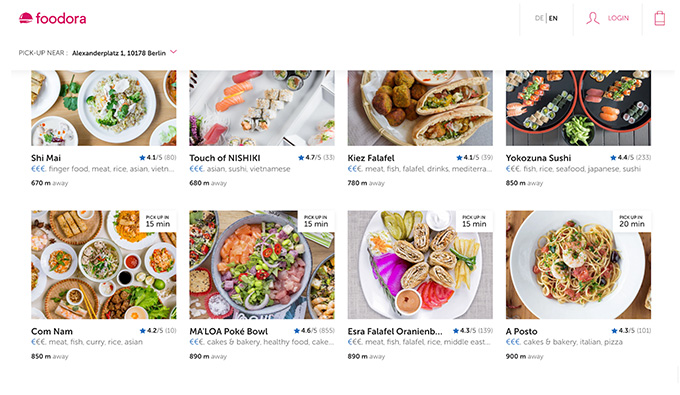

As an example, imagine you are testing the following food delivery site:

You have recently released a feature that allows users to choose pick-up instead of delivery, and are testing a UI element that informs users how far the restaurant is from their current location (“780 m away”). Your objective is to determine if this element is helpful when users are deciding what to order.

You define a task that asks users to locate a certain sushi restaurant and then answer a follow-up question about how far away the restaurant is.

When reviewing your first sessions, you immediately realize that the task is not actually answering your question about whether this feature is helpful or not; rather, it only tells you if users notice it.

This is your cue to revise the task. You rewrite it to simply ask users to find something they would like to order for dinner and pick up from the restaurant. By phrasing the task in this way, you do not explicitly lead the user to check the distance; you can see whether or not it is important to them as they make their choice.

Stay tuned

So far, we’ve covered the most important mistakes to avoid so that planning and running your study go smoothly. In the next post, we’ll discuss some of the errors that people frequently make in the analysis and reporting phase.