Usability testing is based on a simple premise – watch someone use your product – and anyone can learn how to do it. Nevertheless, it does take some learning. In this three-part series, I’ll review some of the most common errors that both beginners and seasoned researchers make in usability testing. First up, we’ll take a look at where things can go wrong in the planning phase.

You don’t have a clear objective

Many people know that they ought to test, but don’t stop to clearly define what they hope to learn from it beyond “Are there any problems?” This lack of focus usually leads to results that are not very useful.

Just as your product needs specific goals about what it does and for whom in order to serve its users, your test needs specific goals in order to serve you. If the starting point for your study is too general, it will be hard to define tasks that will produce concrete findings.

One way to define better objectives for user research is to start with stakeholder research. Conduct interviews or hold a workshop with the people involved in the project. Use these conversations to learn what questions they have that the study could answer, and to gather what they know or believe about users. All too often we assume we are on the same page as our colleagues when there is actually a lot we could learn from each other.

It’s also a good idea to look for any past research that could be relevant and to check analytics data. Reviewing everything you already know will help you define targeted, well-informed research questions.

When you consider what you want to know, think concretely in terms of what you’re working on and how it will affect your development. What do you need to know to make design decisions?

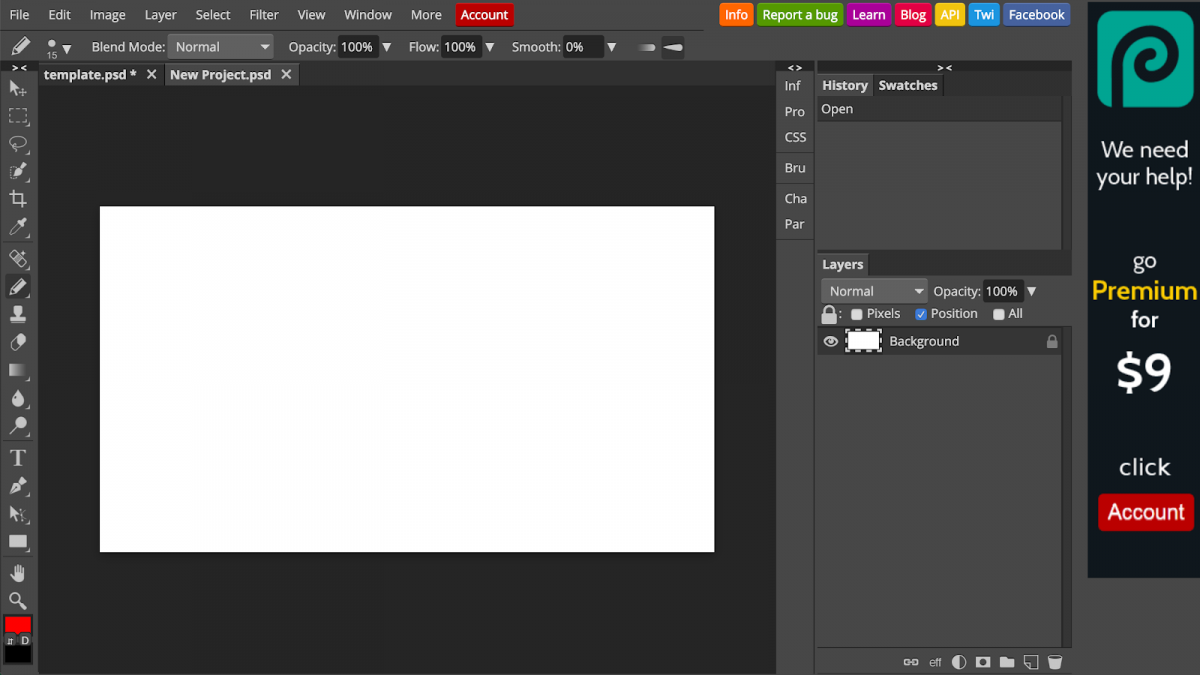

As an example, imagine you want to run a usability study for this browser-based Photoshop alternative, photopea:

Some general research questions that initially occur to you might be:

Is it easy to use?

Do users like it?

Is there anything confusing about it?

These are too vague to plan a study around. To make the test productive, look at what your team is working on. Maybe the pen tool has just been added, and additional features are planned for it. This could lead to specific questions like:

For which kinds of drawing tasks do users prefer the pen, and for which do they prefer the pencil?

Can users easily locate the pen?

Do beginners understand what the handles of pen drawing points control?

When you investigate specific questions like these, you will also get feedback on the more general questions of whether users like the product and are able to use it.

Your scope is too broad

Teams often make the mistake of pushing usability testing back further and further in the development process, until a project is just about completed and has never been put before any potential users. At that point, you will probably realize there are a lot of design features you’d like to evaluate.

Unfortunately, it is too late to test everything. (This is one reason usability testing should be conducted regularly and iteratively, rather than in one huge study. We’ll talk more about this in a later post.)

Just as the individual research questions need to be focused, so does the overall scope of the study. If you try to cover every aspect of a complex product or website, you will have less time to explore any one question in detail, leading to a less meaningful assessment overall. Moreover, you run the risk of exhausting your participants.

Your tasks are poorly written

While the concept of usability testing is simple, it’s not always so simple to write tasks.

First, you need to think of tasks that will get answers to the research questions you defined.

Next, you need to write them in a way that is open-ended and does not lead participants down any particular path. Most obviously, this means not using the same language that appears in your user interface. Instructions should leave room for the user to decide how to accomplish the task. It also helps to provide some context, i.e., why they are doing it.

Don’t make any individual task so long that it’s hard to remember. Work on how you phrase the instructions so that they are as simple and to-the-point as possible.

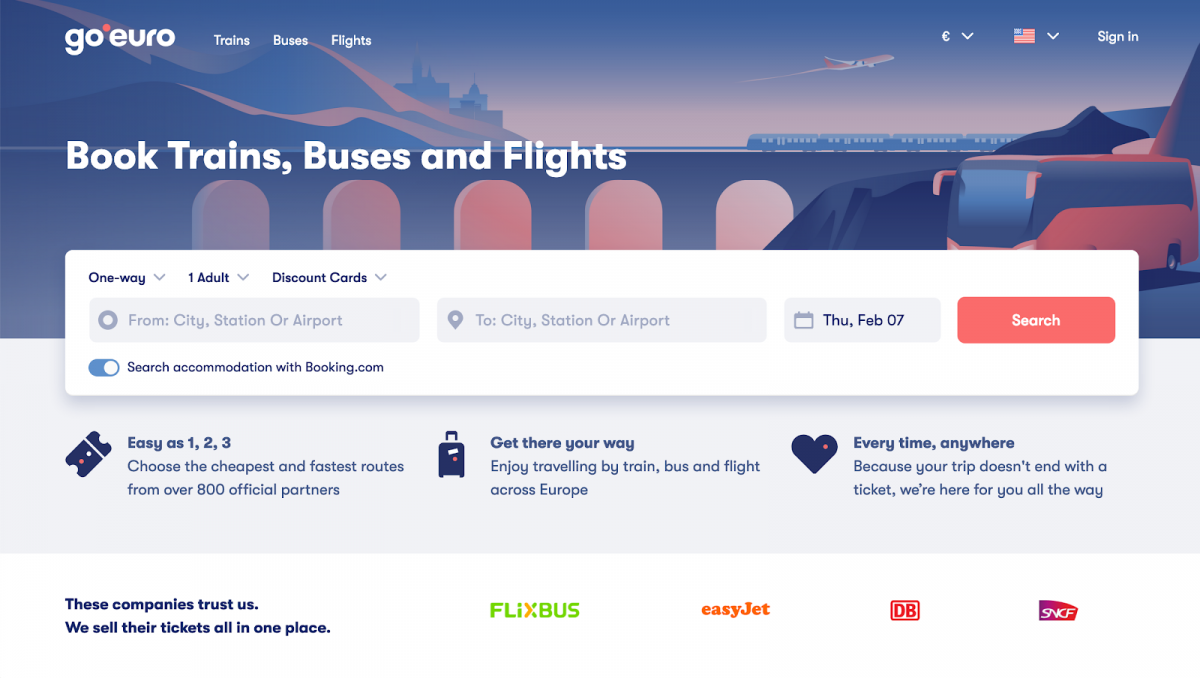

As an example, imagine you want to test the search process on the following travel booking site:

A poorly written task would be, “Search for a round-trip flight for two people from Berlin to Barcelona and select the fastest trip.” This description tells the user exactly what to do, using the same language as the website.

A better way to evaluate the same behavior would be, “Imagine you’re planning a weekend city break with a friend. Find a trip that interests you.” This task simulates a real scenario in which a user would be searching for a ticket, and it does not give away any clues about exactly what to type or which button to click. As a result, it tends to produce more realistic results.
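If you write many tasks, a quick automated check can help you catch wording that echoes the interface. The following is only a rough sketch in Python, not an established tool: the function name `leaked_ui_terms` and the list of interface labels are assumptions made up for illustration, since the actual labels depend on the site you are testing. It flags any label that appears word for word in a task description.

```python
import re


def _normalize(text: str) -> str:
    """Lowercase the text and reduce it to words separated by single spaces."""
    return " ".join(re.findall(r"[a-z0-9]+", text.lower()))


def leaked_ui_terms(task: str, ui_labels: list[str]) -> list[str]:
    """Return the UI labels that appear verbatim (ignoring case and punctuation) in the task."""
    normalized_task = _normalize(task)
    leaked = []
    for label in ui_labels:
        normalized_label = _normalize(label)
        # Match whole words only, so "search" does not match "researching".
        if normalized_label and re.search(
            rf"\b{re.escape(normalized_label)}\b", normalized_task
        ):
            leaked.append(label)
    return leaked


# Hypothetical labels; replace them with the wording of your own interface.
ui_labels = ["Round-trip", "Search", "Fastest", "Passengers"]

poor_task = (
    "Search for a round-trip flight for two people from Berlin to Barcelona "
    "and select the fastest trip."
)
better_task = (
    "Imagine you're planning a weekend city break with a friend. "
    "Find a trip that interests you."
)

print(leaked_ui_terms(poor_task, ui_labels))
print(leaked_ui_terms(better_task, ui_labels))
```

With these assumed labels, the first task is flagged for three terms and the second for none. A check like this only catches literal overlap. It cannot tell you whether a task is open-ended or realistic, so it supplements a careful read-through rather than replacing one.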

Stay tuned

Keeping these points in mind will help give your study a strong foundation. In the next post in this series, we’ll look at common mistakes in running remote unmoderated tests.